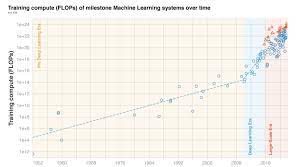

Three Eras of Machine Learning and Predicting the Future of AI

The authors show:

Before 2010, training compute grew in line with Moore's law, doubling roughly every 20 months.

With the advent of Deep Learning in the early 2010s, the scaling of training compute accelerated, doubling approximately every 6 months.

In late 2015, a new trend emerged as firms developed large-scale ML models requiring 10 to 100 times more training compute.
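To make the doubling times above easier to compare, here is a minimal sketch that converts a doubling time into a yearly growth factor. The function names are illustrative and not from the original work; the only inputs are the 20-month and 6-month figures quoted above.

```python
import math


def annual_growth_factor(doubling_months: float) -> float:
    """Factor by which compute multiplies in 12 months, given a doubling time."""
    return 2.0 ** (12.0 / doubling_months)


def months_to_grow(factor: float, doubling_months: float) -> float:
    """Months needed for compute to grow by `factor` at a fixed doubling time."""
    return doubling_months * math.log2(factor)


# Doubling times quoted in the article (in months).
for era, months in [("Pre Deep Learning Era", 20), ("Deep Learning Era", 6)]:
    print(f"{era}: x{annual_growth_factor(months):.2f} per year, "
          f"{months_to_grow(1000, months):.0f} months for a 1000x increase")
```

A 20-month doubling time works out to roughly 1.5x growth per year, while a 6-month doubling time is 4x per year, which is why the shift in the early 2010s is treated as a separate era.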

Based on these observations, they split the history of compute in ML into three eras: the Pre Deep Learning Era, the Deep Learning Era, and the Large-Scale Era. Overall, the work highlights the fast-growing compute requirements for training advanced ML systems.
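One common way to estimate a doubling time within an era is to fit a straight line to the base-2 logarithm of training compute against time. The sketch below assumes that approach and uses synthetic points generated with an exact 6-month doubling time; the data, the starting value of `1e17` FLOP, and the function name are hypothetical and are not taken from the authors' dataset.

```python
import math


def fit_doubling_time(years, flops):
    """Least-squares fit of log2(flops) against year; returns doubling time in months."""
    logs = [math.log2(f) for f in flops]
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    slope = sxy / sxx  # doublings per year
    return 12.0 / slope


# Synthetic example: compute quadruples every year (doubles every 6 months).
years = [2012.0, 2013.0, 2014.0, 2015.0]
flops = [1e17 * 4.0 ** (y - 2012.0) for y in years]

# Close to 6 months by construction.
print(f"Estimated doubling time: {fit_doubling_time(years, flops):.2f} months")
```

With real data the points would scatter around the fitted line, and fitting each era separately is what would reveal the change in slope between them.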

They present a detailed investigation into the compute demand of milestone ML models over time and make the following contributions:

1. They curate a dataset of 123 milestone Machine Learning systems, annotated with the compute it took to train them.
