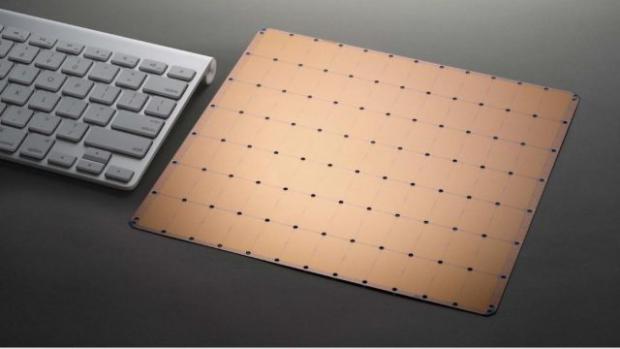

Future Wafer-Scale Chips Could Have 100 Trillion Transistors

Cerebras achieved 0.86 petaFLOPS of performance on its single-wafer system. The wafer-scale chip was built on a 16-nanometer FinFET (FF) process.

The Cerebras Wafer Scale Engine (WSE) is the largest chip ever built. It measures 46,225 square millimeters and contains 1.2 trillion transistors and 400,000 AI-optimized compute cores. Its memory architecture is designed to keep each of these cores operating at maximum efficiency: it provides 18 gigabytes of fast on-chip memory, distributed among the cores in a single-level memory hierarchy, with memory one clock cycle away from each core. The AI-optimized cores, each fed by local memory, are linked by the Swarm fabric, a fine-grained, all-hardware, high-bandwidth, low-latency mesh-connected fabric.
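The specifications quoted for the WSE lend themselves to a quick back-of-the-envelope check. The Python sketch below derives per-area and per-core ratios from those figures. It rests on assumptions the article does not state: that "18 gigabytes" means decimal gigabytes (10^9 bytes), that the memory and the 0.86 petaFLOPS figure are spread evenly across all 400,000 cores, and that the die is square. The constant and function names are illustrative only.

```python
import math

# Figures quoted in the article.
AREA_MM2 = 46_225             # die area in square millimeters
TRANSISTORS = 1.2e12          # 1.2 trillion transistors
CORES = 400_000               # AI-optimized compute cores
ON_CHIP_MEMORY_BYTES = 18e9   # 18 GB on-chip memory (ASSUMPTION: decimal GB)
PEAK_FLOPS = 0.86e15          # 0.86 petaFLOPS on the single-wafer system
FUTURE_TRANSISTORS = 100e12   # the headline's 100 trillion transistors


def wse_ratios() -> dict[str, float]:
    """Derive per-area and per-core ratios from the quoted WSE figures.

    ASSUMPTION: memory and throughput are treated as evenly divided among
    all cores, and the die is treated as a square when computing its side.
    """
    return {
        "transistors_per_mm2": TRANSISTORS / AREA_MM2,
        "transistors_per_core": TRANSISTORS / CORES,
        "memory_bytes_per_core": ON_CHIP_MEMORY_BYTES / CORES,
        "flops_per_core": PEAK_FLOPS / CORES,
        # How many times more transistors the headline's future chip would have.
        "future_scale_factor": FUTURE_TRANSISTORS / TRANSISTORS,
        # Side length of a square die with the quoted area.
        "side_length_mm": math.sqrt(AREA_MM2),
    }


if __name__ == "__main__":
    for name, value in wse_ratios().items():
        print(f"{name}: {value:,.2f}")
```

Worked by hand, the ratios come to roughly 26 million transistors per square millimeter, 3 million transistors and 45,000 bytes of memory per core, about 2.15 gigaFLOPS per core, a side length of 215 mm, and a factor of about 83 between the headline's 100 trillion transistors and the WSE's 1.2 trillion. Because the article does not say what workload produced the 0.86 petaFLOPS figure, the per-core throughput is only an average, not a per-core peak.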

Wafer-scale chips were a goal of computer pioneer Gene Amdahl decades ago, and the problems that long kept them from being built have now been overcome.

In an interview with Ark Invest, the Cerebras CEO explains how the company plans to beat Nvidia as the maker of the processor for AI. According to him, Nvidia GPU clusters take four months to set up before they can start work, while a Cerebras system can be put to use in ten minutes. He adds that each GPU needs two regular Intel chips to be usable.
