Breaking News

Is 'Project Freedom' Just Another Trump Scam?

THEY LIED About the Water - THE WELLS ARE GOING DRY GLOBALLY

After Attack of Cargo Vessel, Trump Directs US to Escort Foreign Ships Through Hormuz

RED ALERT: "I Think That You're Gonna See Billions Dead At This Rate!"

Top Tech News

Robot Dives 1.5 Miles, Maps French Shipwreck With 86,000 Images And Recovers Artifacts

Brain-inspired chip could reduce AI energy use by 70%

"This is the first synthetic species," microbiologist J. Craig Venter told 60 Minutes

Humanoid robots are hitting the factories at an increasing pace

Microsoft's $400 Billion Mistake Is Now a $200 Phone With Zero Tracking

Turn Sand to Stone With Vinegar. Stronger Than Steel. Hidden Since 1627

This is a bioprinter printing with living human cells in real time

The remarkable initiative is called The Uncensored Library,...

Researcher wins 1 bitcoin bounty for 'largest quantum attack' on underlying tech

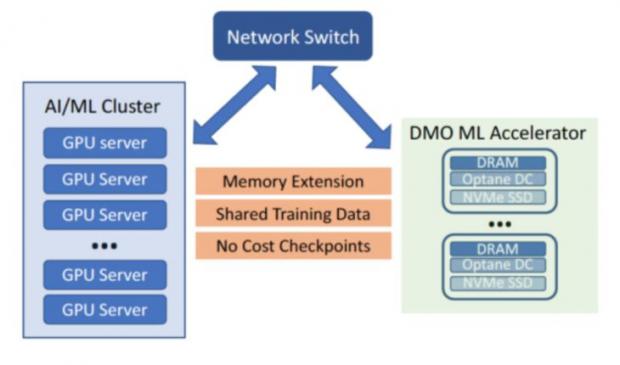

Beyond Big Data is Big Memory Computing for 100X Speed

This new category is sparking a revolution in data center architecture where all applications will run in memory. Until now, in-memory computing has been restricted to a select range of workloads due to the limited capacity and volatility of DRAM and the lack of software for high availability. Big Memory Computing is the combination of DRAM, persistent memory and Memory Machine software technologies, where the memory is abundant, persistent and highly available.

Transparent Memory Service

Scale-out to Big Memory configurations.

100x more than current memory.

No application changes.

Big Memory Machine Learning and AI

* Today, model and feature libraries are often split between DRAM and SSD because DRAM capacity is insufficient, which slows performance.

* MemVerge Memory Machine pools the DRAM and PMEM capacity of the cluster, allowing the model and feature libraries to reside entirely in memory.

* Transactions per second (TPS) can be increased 4X, while inference latency can be improved 100X.

CANCER HAS BEEN CURED
